“When you judge others, you don’t define them, you define yourself” ~ Dr. Wayne Dyer

Is this quote accurate?

In Nine Lies About Work: A Freethinking Leader’s Guide to the Real World, authors Marcus Buckingham and Ashley Goodall share that all the performance evaluations, 360-degree feedback, and surveys you’ve participated in are based on the belief that people can reliably rate other people.

And they can’t.

“Over the last forty years, we have tested and retested people’s ability to rate others, and the inescapable conclusion—reported in research papers such as “The Control of Bias in Ratings: A Theory of Rating” and “Trait, Rater and Level Effects in 360-Degree Performance Ratings” and “Rater Source Effects Are Alive and Well After All”—is that human beings cannot reliably rate other human beings, on anything at all.

We could confirm this by watching the ice-skating scoring at any recent Winter Olympics—how can the Chinese and the Canadian judges disagree so dramatically on the scoring of that triple toe loop?—but instead, let’s take a look at the most revealing real-world study of our rating prowess, or lack thereof.

It was conducted by two professors, Steven Scullen and Michael Mount, and one industrial/organizational psychologist, Maynard Goff. They collected ratings on 4,392 team leaders, from two direct reports, two peers, and two bosses. These team leaders were rated on a combination of leadership competencies, such as “manages execution” or “fosters teamwork” or “analyzes issues,” with a short list of questions measuring each competency, for a total of just under half a million ratings from over twenty-five thousand raters… By slicing and dicing the data the researchers hoped to see what best explained the overall patterns, and what they found was that most of the variation in people’s scores—54 percent of it—could be explained by a single factor: the unique personality of the rater.

From the data it was apparent that each rater—regardless of whether he or she was a boss, a peer, or a direct report—displayed his or her own rating pattern. Some were very lenient raters, skewing far to the right of the rating scale, while others were tough graders, skewing left. Some had natural range, using the entire scale from one to five, while others seemed to be more comfortable arranging their ratings in a tight cluster. Each person, whether he or she realized it or not, had an idiosyncratic pattern of ratings, so this powerful effect came to be called the Idiosyncratic Rater Effect."
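To make the idea of "variation explained by the rater" concrete, here is a minimal sketch in Python. It is not the model Scullen, Mount, and Goff used. It is a simple variance-share (eta-squared) calculation on made-up numbers, and the function name `rater_variance_share` and the example ratings are assumptions for illustration only.

```python
from statistics import fmean


def rater_variance_share(ratings):
    """Return the fraction of total rating variance explained by rater means.

    `ratings` maps a rater name to the list of scores that rater gave.
    This is a simple eta-squared calculation, not the model used in the study.
    """
    all_scores = [score for scores in ratings.values() for score in scores]
    grand_mean = fmean(all_scores)

    # Total sum of squares: how far every score sits from the grand mean.
    ss_total = sum((score - grand_mean) ** 2 for score in all_scores)
    if ss_total == 0:
        return 0.0  # every score is identical, so there is nothing to explain

    # Between-rater sum of squares: how far each rater's average sits from
    # the grand mean, weighted by how many scores that rater gave.
    ss_rater = sum(
        len(scores) * (fmean(scores) - grand_mean) ** 2
        for scores in ratings.values()
    )
    return ss_rater / ss_total


# Hypothetical data: three raters score the same four team leaders (1 to 5).
example_ratings = {
    "lenient_rater": [5, 4, 5, 4],
    "tough_rater": [2, 1, 2, 1],
    "middle_rater": [3, 4, 3, 2],
}

share = rater_variance_share(example_ratings)
print(f"Share of variance tied to the rater: {share:.0%}")
```

With these invented numbers, about 82 percent of the spread in scores comes from who did the rating rather than who was rated. The figure is exaggerated on purpose and should not be read against the 54 percent reported in the study. It only shows how a lenient and a tough rater can dominate the data even when they are scoring the same people.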

Idiosyncratic Rater Effect

What is the Idiosyncratic Rater Effect? “When you judge others, you don’t define them, you define yourself.” Marcus Buckingham explains it in a 2:40 video.

“Business acumen” is something you may be rated on in a 360-degree survey. Each person who rates you will have his or her own idiosyncratic definition of business acumen.

As the authors note, “The same applies to other characteristics such as influencing, decision making, or even performance. Each of these is an abstract vessel into which we pour our own unique meaning: we are not well-informed, and we are, as raters, about as effective as farmers would be estimating numbers of atoms.”

Ratings-based tools, such as annual engagement surveys, performance-rating tools, 360-degree surveys, or any other, do not measure what they purport to measure. Why?

- Human beings can never be trained to reliably rate other human beings,

- Ratings data derived in this way is contaminated because it reveals far more of the rater than it does of the person being rated,

- Contamination cannot be removed by adding more contaminated data.

The Second Hurdle – Data Insufficiency

Reliable, variable, and valid: these are the signs of good data.

The problem with almost all data relating to people—including you—is that it isn’t reliable.

Goals data reports your “percent complete”; competency data compares you to abstractions; ratings data measures your performance and your potential through the eyes of unreliable witnesses. Ratings data wobbles by itself and fails to measure what it says it’s measuring.

Until we come up with a reliable way to measure individual knowledge-worker performance—whether this means the performance of a nurse, or a software developer, or a teacher, or a construction worker—any claim about what drives performance is not valid.

No one knows, and anyone who claims to know simply doesn’t know good data from bad.

Reliable doesn’t mean accurate. Reliable means something doesn’t fluctuate randomly.

Reliable Data – Don’t Ask Others, Ask About Ourselves

Rather than asking whether another person has a given quality, we need to ask how we would react to that other person, if he or she did. Stop asking about others, and instead ask about ourselves.

If we ask you to rate one of your team members on “growth potential,” your rating is unreliable. What is growth potential, and how can you be the judge of it?

On the other hand, if we ask if you plan to promote her today, your answer is reliable.

Rating a team member on something called “performance” is unreliable, because your definition of performance is unique to you. By contrast, your response to the question, “Do you turn to this team member when you want extraordinary results?” is entirely reliable.

The authors note, “you may not be able to project into her psyche and accurately perceive her growth potential, you are able to ask yourself if you plan to promote her today, and the answer you get back will be a reliable one. (When we report on our own experiences, we have all the data we need—we have perfect data sufficiency—because we’re with ourselves a lot!)”

Thus, “Your answer is exactly and only what it purports to be: your subjective reaction to her, carefully measured. It is both a humbler piece of data and, at the same time, a more reliable one.”

If you’re thoroughly confused at this point, good. You should be. The point is simple: neither we nor our peers can reliably rate others. We can share our experience of others, but we cannot rate them, because when we do, we don’t define them; we define ourselves.

Growth demands Strategic Discipline.

How can you build an enduring great organization?

You need disciplined people, engaged in disciplined thought, to take disciplined action, to produce superior results, making a distinctive impact in the world.

Discipline sustains momentum, over a long period of time, to lay the foundations for lasting endurance. It’s the framework for Good to Great:

- Stage 1: Disciplined People

- Stage 2: Disciplined Thought

- Stage 3: Disciplined Action

- Stage 4: Build Greatness

Positioning Systems is obsessively driven to elevate your team’s Discipline. A winning habit starts with 3 Strategic Disciplines: Priority, Metrics and Meeting Rhythms.

Your business dramatically improves forecasting, accountability, individual, and team performance.

Creating Execution Excellence demands defining and understanding your Flywheel with creativity and DISCIPLINE.

Meeting Rhythms achieve a disciplined focus on performance metrics to drive growth.

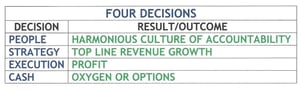

Positioning Systems helps your business achieve these outcomes on the Four most Important Decisions your business faces:

| DECISION | RESULT/OUTCOME |
| --- | --- |
| PEOPLE | |
| STRATEGY | |
| EXECUTION | |
| CASH | |

We help your business Achieve Execution Excellence.

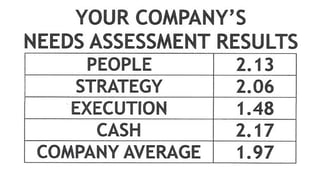

Positioning Systems helps mid-sized ($5M - $250M) businesses Scale-UP. We align your business to focus on Your One Thing! Contact dwick@positioningsystems.com to Scale Up your business! Take our Four Decisions Needs Assessment to discover how your business measures against other Scaled Up companies. We’ll contact you.

Next Blog – Lie #7: People Have Potential

High potential is the corporate equivalent of Willy Wonka’s Golden Ticket: you take it with you wherever you go, and it grants you powers and access denied to the rest of us. The first problem we encounter is how to measure it. You can’t. Lie #7, People Have Potential, is the subject of the next blog.
